No Downtime Database Migrations

Introduction

Back at my last job, we successfully migrated from MongoDB to Cassandra without any downtime. We did two webinars with Datastax at the time (I am now a Datastax employee). The first webinar was a general overview of the migration. In the second, we covered some of the lessons we learned after being in production with Cassandra for a while. We touched on our migration process, but didn’t get deep into the details. This post will discuss the strategy, its goals, and what we learned along the way. The strategy applies to any database migration, including schema changes within a single database, not just moves between databases.

Requirements

- No downtime. Downtime was totally unacceptable! This rules out taking the site down, running the migrations, and then deploying the new code, a.k.a. the easy way. One thing to consider about the easy way is that if anything goes wrong, you may end up with more downtime than you initially anticipated. There’s nothing fun about watching 1 hour of downtime stretch into 6.

- No race conditions. This means we couldn’t run some super fast query or script, then deploy, and hope that we didn’t miss anything in the tiny window between migrating and deploying. Showing errors or incorrect information to even a small percentage of customers is unacceptable.

The Process

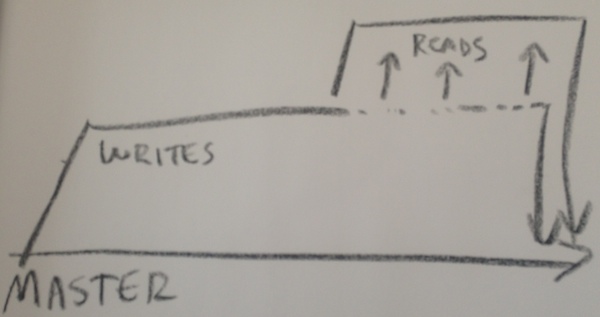

First we create a new branch where we’re going to be doing our initial cut at our data model. I refer to this as our writes branch, because we’re only writing new data, never reading it. This means we can put it in production and never show incorrect data. If we’re creating new tables, or adding new fields to existing ones, we do that at this stage.
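To make the idea concrete, here is a minimal sketch of what a writes branch might look like in application code. It assumes the existing write path stays in place and the new one is added next to it; the `DualWriteRepository` class, its method names, and the in-memory dicts standing in for the old and new databases are all hypothetical, not our actual code.

```python
class DualWriteRepository:
    """Hypothetical writes-branch code: write to both stores, read only from the old one."""

    def __init__(self, old_store, new_store):
        # Plain dicts stand in for the old and new databases (an assumption).
        self.old_store = old_store
        self.new_store = new_store

    def save_user(self, user_id, profile):
        # Existing write path, unchanged.
        self.old_store[user_id] = dict(profile)
        # New write path added by the writes branch.
        self.new_store[user_id] = dict(profile)

    def get_user(self, user_id):
        # Reads are untouched, so nothing from the new store is ever shown.
        return self.old_store.get(user_id)


old_store, new_store = {}, {}
repo = DualWriteRepository(old_store, new_store)
repo.save_user("u1", {"name": "Ada"})
print(repo.get_user("u1"))
```

Because `get_user` never touches `new_store`, a mistake in the new data model can't leak to customers while this code is in production.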

Once we’re finished with our writes branch, we create the reads branch off the writes branch. Do not deploy the writes branch into production yet; wait until you’re finished with the reads branch. Doing it this way serves two purposes:

- It validates your data model

- It limits the amount of time your writes branch is in production by itself

Here’s a diagram which was clearly made by a toddler:

Developing the reads branch in tandem with the writes branch makes it less likely that you’ll deploy the wrong data model. With a database like Cassandra, you don’t model your data the way you would in an RDBMS; you model it to answer specific queries. By waiting to deploy your writes branch until you’re finished with the reads branch, you ensure that you don’t discover mistakes in a data model that’s already in production.
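As a rough illustration of why writing the reads validates the model, consider a made-up query: "the most recent comments for a post". In the sketch below, the `comments_by_post` table, both function names, and the sample data are hypothetical, and a dict stands in for the new database.

```python
# Hypothetical new-store table, keyed by the query it has to answer.
comments_by_post = {}


def write_comment(post_id, created_at, author, body):
    """Writes-branch code: store each comment under the post it belongs to."""
    comments_by_post.setdefault(post_id, []).append((created_at, author, body))


def recent_comments(post_id, limit=2):
    """Reads-branch code: answer the page's query from the new table alone."""
    rows = comments_by_post.get(post_id, [])
    # Newest first, ordered by the created_at field.
    return sorted(rows, key=lambda row: row[0], reverse=True)[:limit]


write_comment("p1", 1, "ann", "first")
write_comment("p1", 3, "bob", "third")
write_comment("p1", 2, "cy", "second")
print(recent_comments("p1"))
```

If the writes branch had keyed comments by author instead, `recent_comments` would have no cheap way to answer its query, and you would find that out while writing the reads branch rather than after deploying.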

When you’re working on your reads branch and realize you need to change your data model, the process is this:

- Commit what you’re working on in your reads branch

- Check out your writes branch

- Make the fix

- Commit to the writes branch

- Check out the reads branch again

- Merge the writes branch into the reads branch
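The steps above map onto a handful of git commands. The sketch below only builds and prints them rather than running anything; the function name, the branch names `writes` and `reads`, and the commit messages are placeholders I made up for illustration.

```python
import shlex


def model_fix_commands(writes_branch, reads_branch, fix_message):
    """Return, in order, the git commands for one data-model fix."""
    return [
        # Commit the in-progress work on the reads branch.
        ["git", "add", "-A"],
        ["git", "commit", "-m", "WIP on reads branch"],
        # Switch to the writes branch; the fix is made by hand at this point.
        ["git", "checkout", writes_branch],
        # Commit the fix to the writes branch.
        ["git", "add", "-A"],
        ["git", "commit", "-m", fix_message],
        # Return to the reads branch and pull the fix in.
        ["git", "checkout", reads_branch],
        ["git", "merge", writes_branch],
    ]


for argv in model_fix_commands("writes", "reads", "Fix data model"):
    print(shlex.join(argv))
```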

I’ve corrected minor data modeling issues this way in less than an hour. That’s far preferable to fixing an error that’s already in production.

Conclusion

Our first couple of migrations with this process felt a little strange. Once something is written and I feel it’s “done”, I get an urge to deploy it to production. Resisting that urge has saved me dozens of hours. I have caught mistakes in many of my writes branches.

Fortunately, git makes this ridiculously easy. Branches are cheap and can be used rather cavalierly. Once this became part of our normal workflow, we found our deployments to be relatively stress-free, since we were extremely confident and weren’t under a short schedule to perform the migration. Over the course of 2 months, we experienced zero downtime, zero rollbacks, and we had a perfect track record of data modeling. It’s hard to accurately express how confident this process made us with our migration.

The downside? It requires a little more planning. Honestly though, thinking about the next few days before you start coding is a GOOD THING.

Hopefully by now you’re convinced that this is the best idea ever, and you will immediately mix it into your day-to-day workflow. When you do, please let me know! I’m @rustyrazorblade on Twitter.
